Managed SaaS releases

April SaaS feature announcements

April 2026

This page provides announcements of newly released features available in DataRobot's SaaS multi-tenant AI Platform, with links to additional resources. From the release center, you can also access past announcements and Self-Managed AI Platform release notes.

Agentic AI

New and retired LLMs

Below is a list of LLM availability changes for April 2026. See the availability page for a full list of supported LLMs. As always, you can add an external integration to support specific organizational needs.

The following are newly available:

- Claude Sonnet 4.6, available for Amazon Bedrock, Google Gemini Enterprise Agent Platform (formerly Vertex AI), and Anthropic.

The following models are retired or will be retired:

Google Gemini Enterprise Agent Platform (formerly Vertex AI)

- Claude Haiku 3: August 23, 2026

- Meta Llama 4 Maverick: Retired

Anthropic

- Claude Opus 4: September 13, 2026

- Claude Opus 4.1: September 13, 2026

- Claude Haiku 3: Retired

TogetherAI

- Mistral Mixtral-8x7B Instruct v0.1: Retired

DataRobot Global MCP server

With this release, DataRobot introduces its Global MCP server, a persistently deployed MCP server that you can access at its endpoint URL: https://{DATAROBOT_URL}/api/v2/genai/globalmcp/mcp (substitute your deployment's URL for {DATAROBOT_URL}). The initial release provides access to DataRobot's predictive AI tools; more tools will be added in future releases. For details on configuring your tools to access the server, see Connect agentic coding environments to MCP servers.
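As an illustration of the URL substitution described above, here is a minimal Python sketch that builds the endpoint from a DataRobot host. Only the path comes from this announcement. The helper names, the shape of the client entry, and the use of a bearer token header are assumptions for illustration; check the linked configuration guide for what your MCP client actually expects.

```python
from urllib.parse import urlsplit

# Path taken from the announcement above.
GLOBAL_MCP_PATH = "/api/v2/genai/globalmcp/mcp"


def global_mcp_url(datarobot_url: str) -> str:
    """Build the Global MCP endpoint for a DataRobot host.

    Accepts a bare host ("app.example.com") or a URL with a scheme
    ("https://app.example.com/"); only the host part is kept.
    """
    value = datarobot_url.strip()
    if "://" not in value:
        value = "https://" + value
    host = urlsplit(value).netloc
    if not host:
        raise ValueError(f"could not find a host in {datarobot_url!r}")
    return f"https://{host}{GLOBAL_MCP_PATH}"


def mcp_client_entry(datarobot_url: str, api_token: str) -> dict:
    """Hypothetical client entry: the key names and the bearer-token
    header are assumptions, not a documented DataRobot format."""
    return {
        "url": global_mcp_url(datarobot_url),
        "headers": {"Authorization": f"Bearer {api_token}"},
    }
```

Calling `global_mcp_url("app.example.com")` yields `https://app.example.com/api/v2/genai/globalmcp/mcp`; the host name here is a placeholder.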

Predictive AI

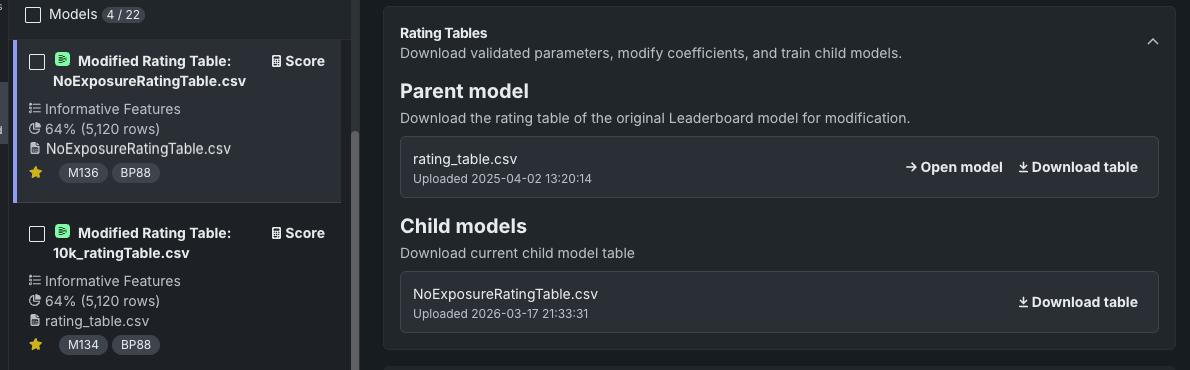

Rating tables now available in NextGen

Available for GAM and GA2M models, rating tables provide a transparent view of a model, showing which features were chosen to form the prediction, their importance, and whether they had a positive or negative impact on the outcome. To adjust a model, download its validated parameters as a CSV file, modify the coefficients, and apply the new CSV to the original (parent) model. This creates a child model with the new parameters, which you can access from the Leaderboard. You can then compare and iterate on models after seeing the impact of your changes.
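The "modify the coefficients" step can also be scripted rather than done by hand. The sketch below scales one coefficient in a CSV using only the Python standard library. The `feature` and `coefficient` column names are hypothetical, chosen for illustration; a real rating table export may use different headers and include additional rows, so adapt the names before applying this to a downloaded file.

```python
import csv
import io


def scale_coefficient(csv_text: str, feature: str, factor: float) -> str:
    """Return a copy of csv_text with one feature's coefficient scaled.

    Assumes hypothetical 'feature' and 'coefficient' columns; this is
    not the documented rating table layout.
    """
    reader = csv.DictReader(io.StringIO(csv_text))
    fieldnames = reader.fieldnames or []
    rows = []
    matched = False
    for row in reader:
        if row["feature"] == feature:
            row["coefficient"] = str(float(row["coefficient"]) * factor)
            matched = True
        rows.append(row)
    if not matched:
        raise KeyError(f"feature {feature!r} not found")

    # Write the rows back out with the original column order.
    out = io.StringIO()
    writer = csv.DictWriter(out, fieldnames=fieldnames, lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return out.getvalue()
```

Editing a copy and leaving the downloaded file untouched keeps the parent model's parameters available for comparison.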

MLOps and predictions

Databricks integration for batch predictions

Databricks is now directly supported as an intake source and output destination for batch prediction jobs, without relying on JDBC connectors.

Code first

Python client v3.15

Python client v3.15 is now generally available. For a complete list of changes introduced in v3.15, see the Python client changelog.
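To confirm that an environment has picked up this release, a small check like the following may help. It is a sketch that assumes the client is installed under the distribution name `datarobot`; the function names are illustrative, and pre-release suffixes such as `b1` are ignored in the comparison.

```python
import re
from importlib.metadata import PackageNotFoundError, version


def release_tuple(version_string: str) -> tuple:
    """Return the leading numeric components, e.g. '3.15.0b1' -> (3, 15, 0)."""
    match = re.match(r"\d+(?:\.\d+)*", version_string)
    if match is None:
        raise ValueError(f"unrecognized version: {version_string!r}")
    return tuple(int(part) for part in match.group(0).split("."))


def meets_minimum(version_string: str, minimum: tuple = (3, 15)) -> bool:
    """True when the numeric part of version_string is at least `minimum`."""
    return release_tuple(version_string) >= minimum


def installed_client_is_current() -> bool:
    """Check the installed client; False if it is missing or too old."""
    try:
        # Assumption: the distribution is named "datarobot".
        return meets_minimum(version("datarobot"))
    except PackageNotFoundError:
        return False
```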

DataRobot REST API v2.44

Version 2.44 of the DataRobot REST API is now generally available. For a complete list of changes introduced in v2.44, see the REST API changelog.

All product and company names are trademarks™ or registered® trademarks of their respective holders. Use of them does not imply any affiliation with or endorsement by them.